- Blog

- Ms word for mac student

- Software compatible with neat scanner for mac

- Xld download os x

- Firestarter apk work for amazon fire tv 2015

- Backyard baseball 2003 scummvm

- Microsoft office crashes when saving

- Command conquer generals zero hour download tpb

- Ellen beyonce single ladies

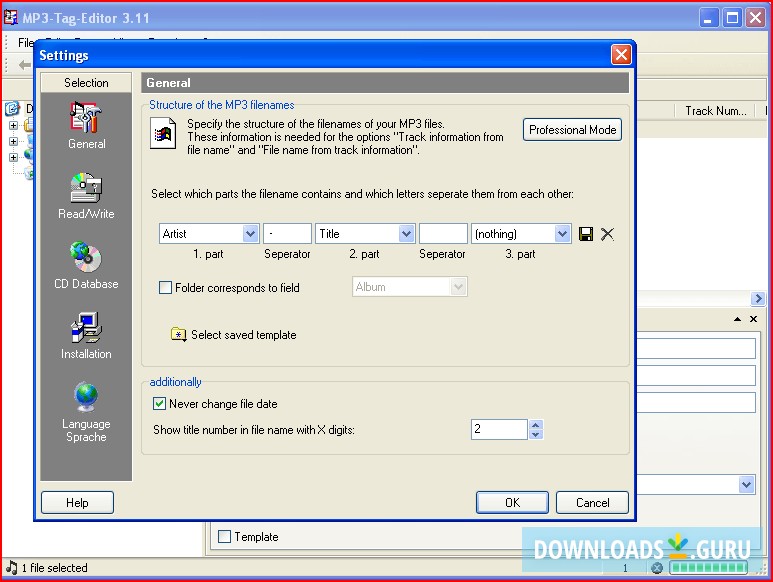

- Best video metadata editor

- Hindi movie kick full movie online

- Transporter refueled movie poster 2015

- Is star wars force awakens book canon

- Best two player video games for wife and i

- Game balap mobil terbaik 2015

- Madden 08 pc download windows 10

- Hardware and software requirements for skype

- How to download access on mac iuanware

- How to use reimage cleaner to get rid of malware on ios

- Lds the testaments movie

- Dolby digital plus audio driver download

- Sega emulator online ren and stimpy

- Hp laserjet 1536dnf mfp scan software

- 2019 affinity photo reviews

- Nicki minaj kanye west dark fantasy

- Cinema 4d cracked onhax

- Ebay desktop timer app windows 10

- Crack photoshop cs6 ma

- Ww kimball baby grand piano

WRT your point about my OP being based on my workflow, I was just seeking to get a greater understanding, and your response helped with that objective. I suppose my point about this being "obvious" was based on the perspective of an amateur or occasional videographer wanting to work more easily with multiple files. It is clear that professionals and industry have their own needs well sorted, and I suppose that's all they are concerned about, so perhaps my "obvious" is limited to others like me. WRT your point about syncing different clocks being difficult, I agree, but what I have done in the past with still photos to get them all in correct time/date order is simply adjust all the metadata in photos from one camera to align with the one master camera. This is also not straightforward and needs a common sync point to identify the variances between clocks.

Sure, it'd be great if all video cameras at least saved an XML file of the desired metadata with the video (so as to overcome differences in video format), but there is no such standard yet. Don't forget that it would also be needed in the video output stream, since many use external video recorders.

However, your question presumes a particular workflow that appears to seem "obvious" to you, but is not one that the industry uses, for a number of reasons. I'm curious why the reasons don't seem "obvious" to you? FWIW, it is a little forward to speculate in your OP on why video should have the required data, but then post on an internet forum and expect to get responses from industry decision-makers on why they didn't design the cameras based on your speculative ideal workflow.

Here's a concept that should be an "obvious" requirement for it to work: your cameras would all have to be synchronized as far as initial time setting (to frame accuracy) and have no drift in system clocks/data encoding. The usual human reaction time is 1/10th of a second, so good luck trying to manually "synchronize watches" to that level of accuracy. Or maybe embed all devices with GPS-based clocks/stamping. The industry uses gen-lock as a solution, because it syncs time and eliminates the drift that can happen with individual differences between equipment.

One thing I'll offer that you may have overlooked: except for rarer scientific requirements for time accuracy (or ones solved with gen-lock), many edits in the industry have nothing to do with the time they were shot. Many pieces are shot out of sequence; different angles and secondary shots might happen on completely different days. For the most part, time-of-shot metadata is worthless to an editor. For example, I just viewed one produced clip with a scene of stars setting over the landscape that was reversed, but that was only obvious to someone aware of how constellations travel across the sky.

Pieces for broadcast (like sporting events) are mostly produced on the fly or are shot with cameras that continue to roll (i.e. pause or stop isn't used). So in post it is a simple ONE POINT SYNC, or they use gen-lock to overcome battery or lens changes. Many setups even put a dedicated camera on the game clock to make correlation to it easier, another thing metadata wouldn't solve. As a science example, those who film asteroid-star occultations from different locations record a timing radio/satellite broadcast into the audio for precise calibration.
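The one-point sync and the "align everything to one master camera" approach mentioned in this thread can be sketched roughly as follows. This is only an illustration: the clip names, the sample times, and the helper names `clock_offset` and `align` are made up for the example, and it assumes each clip's start time has already been read from its metadata.

```python
from datetime import datetime, timedelta

def clock_offset(master_time: datetime, other_time: datetime) -> timedelta:
    """Offset to add to the other camera's clock so it agrees with the master."""
    return master_time - other_time

def align(clips, offset):
    """Return (name, corrected_start) pairs with the offset applied."""
    return [(name, start + offset) for name, start in clips]

# The same moment (for example a hand clap) as stamped by each camera's clock.
# These values are hypothetical.
sync_on_master = datetime(2020, 1, 1, 12, 0, 0)
sync_on_cam_b = datetime(2020, 1, 1, 11, 58, 30)  # camera B runs 90 s slow

offset_b = clock_offset(sync_on_master, sync_on_cam_b)

master_clips = [("A1", datetime(2020, 1, 1, 12, 5, 0))]
cam_b_clips = [
    ("B1", datetime(2020, 1, 1, 12, 2, 0)),
    ("B2", datetime(2020, 1, 1, 12, 4, 0)),
]

# Merge both cameras onto the master clock and sort into true shooting order.
timeline = sorted(master_clips + align(cam_b_clips, offset_b), key=lambda c: c[1])
order = [name for name, _ in timeline]
print(order)
```

With the uncorrected stamps, B2 would sort before A1; after the 90-second correction it sorts after it. A single offset like this cannot correct drift between clocks over a long shoot, which is the gap gen-lock or a second sync point is meant to cover.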

We can all speculate; I am seeking to understand the real industry reasons for these omissions.

I would prefer responses based on actual knowledge or reasons rather than speculative opinion. For example, in something like a yacht race, it would be very difficult to tell which clip came before or after another just by looking at it. Having a time stamp on each frame would help a lot when trying to ensure correct time continuity.

This seems so obvious to me that I cannot understand why it is not readily available and widely used by videographers putting together a story from multiple GoPros or other cameras recording a common event. It would reduce the need for clapper boards or the like. It would also allow multiple clips from multiple cameras to be put in time order and aligned more easily in editing software.
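Even without a stamp stored on every frame, a per-frame time can be derived from a clip's start time and its frame rate, provided the camera clock is trustworthy. The sketch below shows the arithmetic only; the function names, start times, and the 25 fps rate are assumptions for illustration, not values from any particular camera.

```python
from datetime import datetime, timedelta
from fractions import Fraction

def frame_time(clip_start: datetime, frame_index: int, fps: Fraction) -> datetime:
    """Wall-clock time of a frame, given the clip's start time and frame rate."""
    seconds = Fraction(frame_index) / fps
    return clip_start + timedelta(seconds=float(seconds))

def frame_at(clip_start: datetime, when: datetime, fps: Fraction) -> int:
    """Index of the frame being recorded at wall-clock time `when`."""
    elapsed_us = (when - clip_start) // timedelta(microseconds=1)
    return int((elapsed_us * fps) // 1_000_000)

fps = Fraction(25)                        # hypothetical frame rate
start_a = datetime(2020, 1, 1, 12, 0, 0)  # hypothetical start of camera A's clip
start_b = datetime(2020, 1, 1, 12, 0, 10) # camera B started 10 s later

# Find the frame in each clip that shows the same moment.
moment = datetime(2020, 1, 1, 12, 0, 12)
frame_a = frame_at(start_a, moment, fps)
frame_b = frame_at(start_b, moment, fps)
print(frame_a, frame_b)
```

Using `Fraction` for the rate keeps rates such as 30000/1001 exact. The result is only as good as the start times, so the clock-accuracy and drift concerns raised in the reply still apply.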

#Best video metadata editor iso

Can anyone enlighten me as to why metadata on video files is so limited and why seemingly "obvious" info is missing? For example, basic info like the ISO setting used when taking the video, and the camera details including lens settings and shutter speed. Additionally, I am perplexed as to why the time/date data from the camera is not recorded as metadata against each frame.